Replacing the research pile with a briefing agent

Turning a 4 hour research scramble into an AI-native workflow

I had wanted to build this for District 3 since the commercial AI wave kicked off in mid-2022. The admissions workflow was exactly the kind of process that made me think "this wouldn't exist like this if built today," but I never had the right opening to pursue it. Wealthsimple's AI Builder prompt finally gave me one.

output brief

The Bottleneck

District 3 is a publicly funded startup incubator at Concordia University in Montreal, processing roughly 200 to 250 applications per month. When an application lands, an operations team member spends one to four hours on background research: scouring LinkedIn, checking founder websites, mapping the competitive landscape, looking for patents and press. They write hasty bullet-point notes and assign a program stream. Stream leads walk into panel meetings and spend their first ten minutes just orienting themselves.

The research is the bottleneck. It's tedious and inconsistent. Two people reviewing the same application can produce dramatically different notes. The quality of the research dictates the quality of the panel's decision, yet it's treated as rote admin work.

This is the kind of process that evolved before modern AI. It wouldn't exist like this if built today.

understanding D3

what's broken

stakeholder mapping

drawing the boundary

the existing process

Before

- Application sits for days

- 1–4 hours of manual research

- Hasty, inconsistent bullet-point notes

- Stream leads walk in cold

- Quality depends on who's on shift

After

- Application triggers agent immediately

- ~18 minutes, ~$2.50 CAD in API costs

- 9-section cited brief with risk flags

- Stream leads arrive informed

- Consistent quality, every time

What I Built

I built an AI system that eliminates the research bottleneck. When a new application is submitted, an agent autonomously generates a research brief: founder profiles with verified sources, competitive analysis, SDG alignment assessment, stream classification with reasoning, a scored evaluation rubric, risk flags, and interview questions for both operations staff and panelists, all cited to real URLs or application fields.

The human can now open an application that's already deeply researched. Operations shifts from doing research to reviewing research. Stream leads arrive at panel meetings with context instead of spending their first ten minutes catching up.

The Brief: Nine Sections, All Cited

Every brief the agent produces contains nine sections, each with mandatory citations to URLs, application fields, or knowledge base documents:

| Section | Purpose |

|---|---|

| Synthesis | What the startup does, overall confidence score, and a plain-language recommendation |

| Founder Profiles | Per-founder background research with credibility signals and gaps |

| SDG Coherence | Assessment of whether claimed UN Sustainable Development Goals actually match the work |

| Competitive Context | Comparable ventures, market landscape, and differentiation analysis |

| Evaluation Scorecard | Each rubric criterion scored with justification and confidence level |

| Stream Classification | Best-fit D3 program stream and stage with reasoning |

| Key Risks | Red flags, gaps, and concerns ranked by severity |

| Questions for Ops | Gap-based questions for the operations team to investigate before interview |

| Questions for Panelists | Evaluation-based questions to probe during the interview itself |

Design Philosophy: Sandbox, Not Script

Version 1 of this system was a scripted pipeline, a chain of prompts with predetermined steps and retry logic. It broke constantly. If a LinkedIn page was down or a founder didn't have a website, the whole chain derailed. Wrong output was worse than no output, because it created more work for the ops team to correct.

For version 2, I replaced choreography with agentic design. The agent isn't given a task list. It's given an environment: knowledge to reference, tools to use, a goal to achieve, and guidelines for quality. Within that environment, the agent decides what to research, in what order, and how deep to go.

the sandbox

Knowledge

A /knowledge folder the agent reads at the start of every run, containing D3's mandate, evaluation rubric, stream definitions, and SDG framework. This is what makes it D3's agent, not a generic research bot.

Tools

Thirteen tools the agent can call in any order, from web research with multi-strategy fallback to self-assessment checkpoints, brief section emitters, human review flags, mid-run human input requests, and working memory for notes and research plans.

Goal

Produce nine brief sections, all cited. The agent knows what "done" looks like but has full autonomy over how to get there.

Guidelines

A quality bar, not step-by-step instructions. Every factual claim needs a citation. Confidence thresholds determine whether to proceed, retry, or flag for human review. And critically: zero revisions is a sign of a first-pass report.

How the Agent Works

agentic flow

The agent operates in a loop of research, assessment, and output. It loads D3's knowledge base, reads the application, creates a research plan, then executes that plan phase by phase. After each phase, it self-assesses its confidence and decides whether to proceed, retry with different sources, or flag for human review.

Brief sections are emitted incrementally as the agent completes them, not batched at the end. This means the brief builds in real time, and observers can watch the research unfold through a live server-sent event stream.

The Backtracking Loop

The key design insight. After each self-assessment, the agent reviews all previously emitted sections and asks: "Does anything I've already published need updating in light of what I just learned?" If the answer is yes, it revises the section and logs the reason. This is how the system produces an honest, non-linear brief rather than a first-pass report.

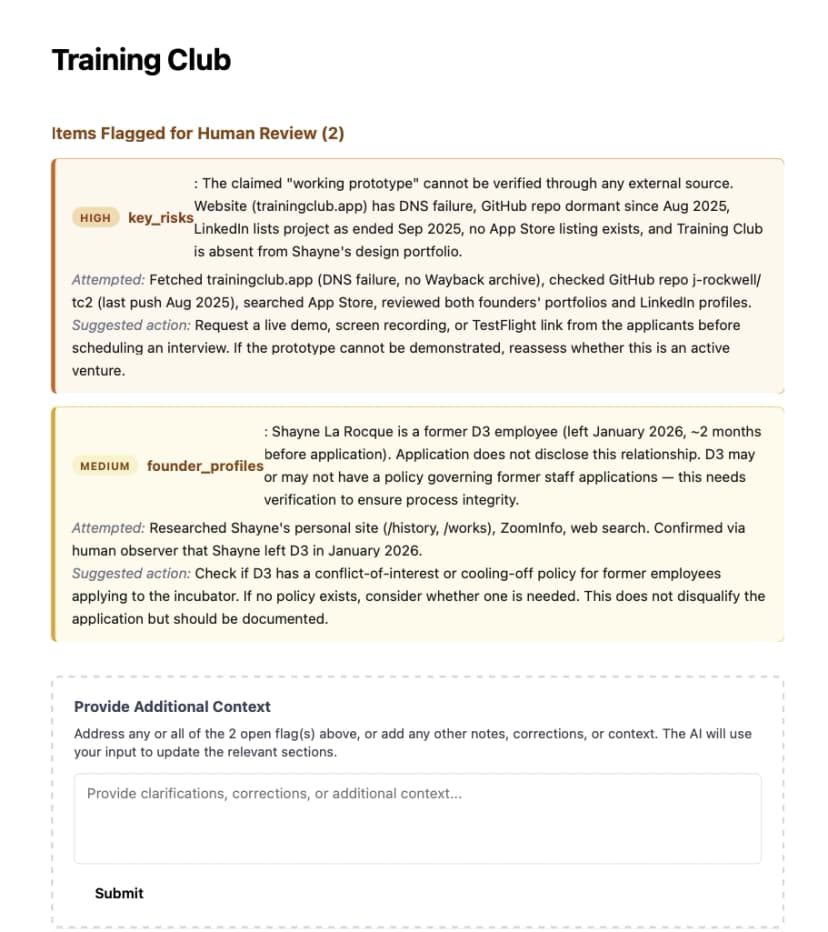

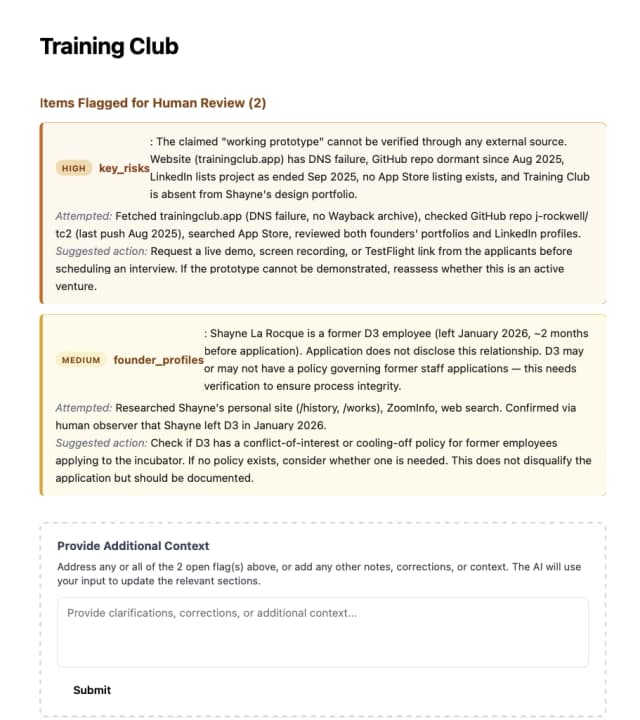

One Real Run

The useful artifact is not hidden chain-of-thought. It's the audit trail: what the agent tried, what failed, when it asked for help, and what brief it ultimately produced.

This is the literal run log: fetch failures, escalations, self-assessments, emitted sections, and final review flags.

brief preview

Nine cited sections, ranked flags, and a cleaner starting point for the ops team and panelists.

Download full brief PDFDownload raw logResearch Resilience: The Fetch Cascade

When the agent needs to research a URL, it doesn't just fetch and hope. It runs through a five-strategy cascade:

GitHub API

For github.com URLs, returns structured profile data: repos, stars, languages, bio

Direct HTTP Fetch

With browser headers, HTML extraction, meta tags, Next.js SSR/RSC data extraction, and structured navigation links

Agent-Driven Exploration

The tool returns navigation links from the page; the agent decides which sub-pages are relevant to its research question and fetches those

Jina Reader

Headless browser rendering for JavaScript-heavy SPAs that don't serve content in initial HTML

Wayback Machine

CDX API lookup for dead or blocked sites, fetches most recent archived snapshot

If all five strategies fail, the agent flags the gap honestly and moves on. It never fabricates. Even the research tool follows the sandbox pattern: the tool provides information (page content plus navigation links), the agent provides judgment (which links are worth following).

Real example from test run

LinkedIn returned a 429 rate-limit block for one founder. The agent fell back to the founder's personal website, ZoomInfo via web search, and GitHub API. It still assembled a comprehensive profile. Then it flagged the LinkedIn gap honestly in the brief so the ops team knew what wasn't checked.

Human-in-the-Loop: Two Touchpoints

The system has two distinct moments where humans interact with the agent's work, each designed for a different purpose.

During the Run: Phone-a-Friend

When the agent hits genuine ambiguity that would change its research direction, it can pause and ask a human observer a question in real time. The question appears in the live agent log, and the observer types a response. The agent wakes up and continues with the new context.

If no human responds within five minutes, the agent times out, flags the gap honestly, and proceeds without fabricating an answer.

After the Run: Reviewer Context

When the brief is complete, flagged items are ranked by severity at the top. A reviewer can provide corrections, missing context, or policy clarification through a form. A focused mini-agent then rewrites the affected sections incorporating the new information.

The brief evolves in place. Flags are marked "resolved," and the reviewer can continue adding context even after all flags are addressed.

Real example from test run

The agent discovered that one of the applicants appeared to be a current employee of District 3, the very incubator being applied to (Can you guess who it was researching? 😉). It found this by cross-referencing the founder's portfolio site, schema.org metadata, and ZoomInfo data. Rather than guessing how to treat this, it paused and asked the human observer: "Should I treat this as a conflict of interest, as neutral context, or as something else?" The observer clarified that the founder had left D3 in January. The agent updated its analysis accordingly.

Where AI Stops

The AI handles all research, analysis, cross-referencing, and structured output. It is explicitly not responsible for any decision or communication. It cannot accept or reject an applicant, send an email, or route a startup to a stream lead.

The tools for these actions don't exist. This isn't a policy restriction that could be overridden by clever prompting. It's an architectural boundary. The agent can't cross the line because the line is a wall.

AI is responsible for

- All background research and fact-finding

- Competitive landscape analysis

- SDG alignment assessment

- Stream classification with reasoning

- Rubric scoring with justification

- Risk identification and flagging

- Generating interview questions

- Self-assessing its own work quality

- Honestly flagging what it can't resolve

Humans are responsible for

- All accept/reject decisions

- All outbound emails to founders

- The pitch meeting and deliberation

- Final stream assignment confirmation

- Whether to act on flagged items

- Reviewing and approving communication

the wall

The Critical Decision That Must Remain Human

Accept/reject after the pitch meeting. This is where institutional judgment, founder rapport, and cohort composition mix in ways that can't be replicated by an AI, no matter how clever the prompting. The brief gets the panel 90% of the way there. The last 10% is theirs.

Emergent Behaviour: What Nobody Programmed

Because the agent has tools, knowledge, and the freedom to reason about what it finds, it catches things that no scripted system would. Two examples from the test run:

Undisclosed Insider Connection

The agent discovered that one founder was a former D3 employee by cross-referencing their portfolio site's schema.org metadata, ZoomInfo results, and LinkedIn activity, then flagged the undisclosed relationship. Nobody programmed a "check if the applicant works at D3" step. The agent found it because it had the right tools and context to reason about what it was seeing.

Project Dormancy Detection

The agent pieced together from both founders' profiles, GitHub commit history, LinkedIn project dates, DNS lookup failures, and App Store searches that the project was likely dormant despite claims of a "working prototype used daily." Five independent data points, cross-referenced into a single finding.

These are the kinds of findings that justify the agentic approach. A scripted pipeline would check predetermined sources in a predetermined order. The sandbox lets the agent follow leads, cross-reference across sources, and surface patterns that emerge from the data.

What Changed: V1 to V2

V1 post-mortem

V1 findings

V1: Scripted Pipeline

- Gemini + Tavily, built with Codex

- Linear steps with retry logic

- Predetermined research order

- Broke when reality didn't match the script

- Wrong output worse than no output

V2: Sandbox Design

- Claude Agent SDK, custom MCP server

- Agent reasons about approach dynamically

- Self-assessment after each research phase

- Backtracking when new findings contradict earlier work

- Human-in-the-loop at two touchpoints

Key V1 Lessons That Shaped V2

Wrong output is worse than no output

Fabrication creates more work than gaps. The system must be honest about what it doesn't know.

Quality matters more than task completion

The agent needs a target state, not just a task list. Finishing all steps poorly is worse than flagging three steps as unresolvable.

Self-review loops are essential

Assess sufficiency before proceeding. If confidence is low, retry. If still low, flag for human review.

Domain knowledge is the differentiator

The knowledge base is what separates a useful agent from a generic research bot. Without D3's rubric and stream definitions, the agent can't make meaningful assessments.

You can't scrape LinkedIn

V1 relied heavily on LinkedIn data. V2 asks applicants for more profile URLs upfront, then uses a multi-strategy fetch cascade to get data from whatever sources are available.

What Breaks First at Scale

Trust calibration. The brief is good enough that ops might rubber-stamp instead of reviewing. The self-assessment loops and human review flags resist this. The system tells you when it's uncertain, but the real safeguard is organisational. Keeping humans accountable for the decisions the system explicitly refuses to make.

Other scaling concerns include API cost management at 200+ briefs per month, rate-limiting from external sources like LinkedIn, and the need for a proper database as the file-based storage approach won't hold under concurrent load. But the trust calibration problem is the most interesting because it's not a technical problem. It's a human one. The system is designed to make human oversight easier, but it can't force people to actually exercise it.

Why This Finally Got Built

I had been circling this idea since 2022, when it became obvious that a lot of legacy workflows were about to look embarrassingly pre-AI. District 3's admissions research process was one of them. High-volume, repetitive, cognitively messy, and still dependent on humans to stitch together context from scattered sources. It was exactly the kind of system I wanted to rebuild, but not the kind of internal project you can casually decide to spin up on your own.

The Wealthsimple application prompt was what actually made me build this; it asked for a real system, not a speculative deck, and it explicitly cared about where AI should take on responsibility and where it should stop. That was the kick in the pants I needed to finally build the thing properly.

What interested me most was the pattern, and how it can be applied to other use cases. This kind of high-volume review work with fragmented evidence, real operational pressure, and a decision boundary that still belongs to a human. That same pattern shows up in places like KYC and AML. I would not hand final judgment to the model, but I would trust it to assemble the case, surface inconsistencies, rank risk, and give a human reviewer a far better starting point with valuable time saved.